Chapter 1 Selecting a paper

The goal of this stage is to help you select the paper you will reproduce. You might first consider multiple papers without analyzing them closely (we refer to these as candidate papers) before moving forward with your declared paper.

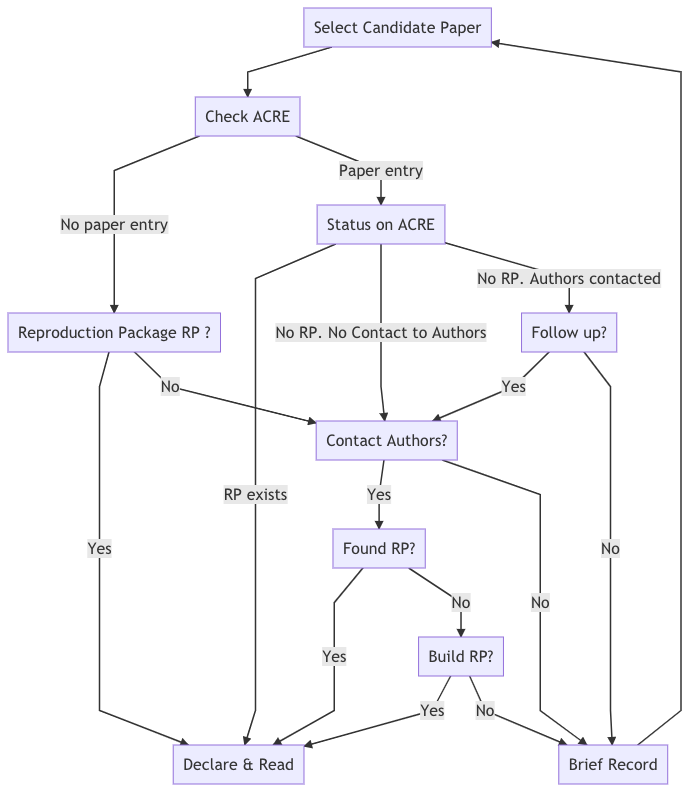

The main difference between a candidate and a declared paper is the availability of a reproduction package. A reproduction package is the collection of materials that make it possible to reproduce a paper. This package may contain data, code, or documentation. If you are unable to independently locate the reproduction package for your paper, you can ask the paper’s author for it (find guidance on this in Chapter 7) or simply choose another candidate paper. If you still want to explore the reproducibility of a paper with no reproduction package, these guidelines provide instructions for requesting materials from authors to create a public reproduction package, or if this proves unsuccessful, for building your reproduction package from scratch.

To avoid duplicating the efforts of others who may be interested in reproducing one of your candidate papers, we ask that you record your candidate papers in the SSRP.

Note that in this stage, you are not expected to review the reproduction materials in detail, as you will dedicate most of your time to this in later stages of the exercise.

1.1 From candidate to declared paper

At this point in the exercise, you are only verifying the availability of (at least) one reproduction package, not assessing the quality of its content. Follow the steps below to verify that a reproduction package is available, and stop as soon as you find one (this may mean that you have found your declared paper).

- Check whether previous reproduction attempts have been recorded in the SSRP for the paper.

- Check the journal or publisher’s website, looking for materials named “Data and Materials,” “Supplemental Materials,” “Reproduction/Replication Package/Materials,” etc.

- Look for links in the paper (review the footnotes and appendices).

- Review the personal websites of the paper’s author(s).

- Contact the author(s) to request the reproduction package using this email template. In this and future interactions with authors, we encourage you to follow our guidance outlined in Chapter 7.

- Deposit the reproduction package in a trusted repository (e.g., Dataverse, Open ICPSR, Zenodo, or the Open Science Framework) under the name “Original reproduction package for - Title of the paper”. You will be asked to provide the URL of the repository in Survey 1.
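The search in the first five steps above works like a short-circuiting loop. You try each source in order and stop at the first one that turns up a package. The sketch below is a minimal Python illustration of that reading. The source labels, the `find_reproduction_package` function and the example URL are all hypothetical. In practice, each "check" is a manual look-up, not a function call.

```python
from typing import Callable, List, Optional, Tuple

# A "source" pairs a label with a check that returns the URL of a reproduction
# package if one is found there, or None otherwise. Both are hypothetical
# stand-ins for the manual look-ups described above.
Source = Tuple[str, Callable[[], Optional[str]]]


def find_reproduction_package(sources: List[Source]) -> Optional[Tuple[str, str]]:
    """Try each source in order and stop at the first one that yields a package."""
    for label, check in sources:
        url = check()
        if url is not None:
            # Package found: the candidate paper becomes the declared paper.
            return label, url
    # Nothing found: record the candidate paper in the SSRP and move on.
    return None


# Hypothetical example in which the package is linked from a footnote.
sources: List[Source] = [
    ("previous SSRP entries", lambda: None),
    ("journal or publisher website", lambda: None),
    ("links in the paper", lambda: "https://example.org/package"),
    ("authors' personal websites", lambda: None),
    ("request to the authors", lambda: None),
]
print(find_reproduction_package(sources))  # ('links in the paper', 'https://example.org/package')
```

The function returns as soon as one check succeeds. As a result, the later and slower steps, such as waiting for a reply from the authors, are only reached when the earlier ones fail.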

In case you need to contact the authors, make sure to allocate sufficient time for this step (we suggest at least three weeks before the date you plan to start the reproduction). Instructors should also plan accordingly (e.g., if the ACRE exercise is expected to take place in the middle of the semester, students should review candidate papers and, if applicable, contact the authors in the first few weeks of the semester).
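The three-week buffer translates into a simple date calculation. The snippet below defines a hypothetical helper, `latest_contact_date`, which is not part of any ACRE tool. For a given start date, it returns the last day on which to email the authors. Three weeks is a suggested minimum, so treat the result as a deadline rather than a target.

```python
from datetime import date, timedelta

# Minimum lead time suggested above for corresponding with the authors.
MIN_LEAD_TIME = timedelta(weeks=3)


def latest_contact_date(reproduction_start: date) -> date:
    """Latest date to contact the authors for a reproduction starting on `reproduction_start`."""
    return reproduction_start - MIN_LEAD_TIME


# Hypothetical example: the reproduction is scheduled to start on 15 October 2024.
print(latest_contact_date(date(2024, 10, 15)))  # 2024-09-24
```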

Review the decision tree (Figure 1.1) below for a more detailed overview of this process. Remember, if at any step of the process you decide to abandon the paper, make sure to record the candidate paper in the SSRP before moving on to another candidate paper. Once you have obtained the reproduction package, the candidate paper becomes your declared paper and you can move forward with the exercise! Do not invest time in doing a detailed read of any paper until you are sure that it is your declared paper.

1.1.1 Candidate paper entries in the SSRP

If the SSRP contains previous reproduction attempts of the paper, you will see a report card with the following information:

Box 1: Summary Report Card for SSRP Paper Entry

Title: Sample Title

Authors: Jane Doe & John Doe

Original Reproduction Package Available: Yes (link)/No.

[If “No”] Contacted Authors?: Yes/No

[If “Yes” (contacted)] Type of Response: Categories (6).

Additional Reproduction Packages: Number (e.g., 2)

Authors Available for Further Questions for SSRP Reproductions: Yes/No/Unknown

If, after taking steps 1-5 above (or for some other reason), you are unable to locate the reproduction package, record your candidate paper (and, if applicable, the outcome of your correspondence with the original authors) in the SSRP database following the example above.
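Box 1 has a conditional structure: some fields only apply in certain cases. One way to see this is to treat the report card as a record. The sketch below models it as a Python dataclass. The class and field names are hypothetical. The six response categories are not listed here, so they are left as free text. None of this reflects the SSRP's actual data schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReportCard:
    """Hypothetical model of the fields in Box 1; not the SSRP's actual data schema."""

    title: str
    authors: str
    package_available: bool
    package_link: Optional[str] = None        # required when a package is available
    contacted_authors: Optional[bool] = None  # asked only when no package is available
    response_type: Optional[str] = None       # one of the SSRP response categories
    additional_packages: int = 0
    authors_available: str = "Unknown"        # "Yes", "No", or "Unknown"

    def __post_init__(self) -> None:
        # Enforce the conditional structure of Box 1.
        if self.package_available and not self.package_link:
            raise ValueError("an available package needs a link")
        if self.package_available and self.contacted_authors is not None:
            raise ValueError("'Contacted Authors?' applies only when no package is available")
        if self.response_type is not None and self.contacted_authors is not True:
            raise ValueError("'Type of Response' applies only when the authors were contacted")
        if self.additional_packages < 0:
            raise ValueError("additional_packages cannot be negative")
        if self.authors_available not in {"Yes", "No", "Unknown"}:
            raise ValueError("authors_available must be 'Yes', 'No', or 'Unknown'")


# The sample entry from Box 1, for a paper whose authors were not contacted.
card = ReportCard(
    title="Sample Title",
    authors="Jane Doe & John Doe",
    package_available=False,
    contacted_authors=False,
)
print(card.authors_available)  # Unknown
```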

Figure 1.1: Decision tree to move from candidate to declared paper

1.2 Identifying the relevant timeline

Before you begin working on the four main stages of the reproduction exercise (Scoping, Assessment, Improvement, and Robustness), it is important to manage your own expectations and those of your instructor or advisor. Be mindful of your time limitations when defining the scope of your reproduction activity. These will depend on the type of exercise chosen by your instructor or advisor and may range from a week-long homework assignment to a semester-long project (an undergraduate thesis, for example).

Table 1 shows an example distribution of time across three different reproduction formats. The Scoping and Assessment stages are expected to last roughly the same amount of time across all formats (lasting longer in semester-long activities, as less experienced researchers, such as undergraduate students, may need more time). Differences emerge in the distribution of time across the last two main stages: Improvement and Robustness. For shorter exercises, we recommend avoiding any improvements to the raw data (or cleaning code). This will limit how many robustness checks are possible (for example, by limiting your ability to reconstruct variables according to slightly different definitions), but it should leave plenty of time for testing different specifications at the analysis level.

| Stage of reproduction | 2 weeks (~10 days) | 1 month (~20 days) | 1 semester (~100 days) |
|---|---|---|---|
| Scoping | 10% (1 day) | 10% (2 days) | 10% (10 days) |
| Assessment | 35% | 25% | 15% |
| Improvement | 25% (analysis data), 0% (raw data) | 40% | 20% (analysis data), 30% (raw data) |
| Robustness | 25% (analysis data), 5% (raw data) | 25% | 25% |
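The percentages in Table 1 can be converted into days with simple arithmetic. In this sketch, the analysis-data and raw-data shares of each stage are added together. For example, Robustness in the 2-week format is 25% + 5% = 30%. The sketch also checks that each format's shares add up to 100%. The names `ALLOCATIONS` and `days_per_stage` are hypothetical.

```python
from typing import Dict, Tuple

# Total share of time per stage, in percent, for each format in Table 1
# (for split stages, the analysis-data and raw-data shares are added together).
ALLOCATIONS: Dict[str, Tuple[int, Dict[str, int]]] = {
    "2 weeks": (10, {"Scoping": 10, "Assessment": 35, "Improvement": 25, "Robustness": 30}),
    "1 month": (20, {"Scoping": 10, "Assessment": 25, "Improvement": 40, "Robustness": 25}),
    "1 semester": (100, {"Scoping": 10, "Assessment": 15, "Improvement": 50, "Robustness": 25}),
}


def days_per_stage(total_days: int, shares: Dict[str, int]) -> Dict[str, float]:
    """Convert percentage shares of a time budget into days per stage."""
    if sum(shares.values()) != 100:
        raise ValueError("shares must add up to 100%")
    return {stage: total_days * pct / 100 for stage, pct in shares.items()}


for fmt, (total_days, shares) in ALLOCATIONS.items():
    print(fmt, days_per_stage(total_days, shares))
```

The Scoping figures reproduce the 1, 2, and 10 days shown in the table. In the 1-semester format, the remaining days split into 15 for Assessment, 50 for Improvement, and 25 for Robustness.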

1.3 Potential sources of papers to prioritize

1.3.1 Prioritize based on higher likelihood of reproduction

To curate a list of target papers in economics, instructors can consult recently published articles in the journals of the American Economic Association (AEA), especially articles published since 2019, which are more likely to contain reproduction packages given a change in the AEA Data and Code Availability Policy that took effect that year. In political science, target papers can be found in the American Journal of Political Science, especially those published after 2016, which are also likely to contain reproduction packages. In other social science disciplines, instructors can focus on journals that score 2 or above in data, code, and materials transparency in the Transparency and Openness Promotion (TOP) Factor (see scores here), maintained by the Center for Open Science.
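The rules of thumb above can be written as a filter over a list of candidate papers. This is a minimal sketch under stated assumptions:

- The `Candidate` class and `is_priority` function are hypothetical.
- The paper titles, journals "Journal X" and "Journal Y", and their TOP Factor scores are made up for illustration. Real scores should be looked up on the TOP Factor site.
- Journals missing from the score lookup are treated as not meeting the threshold.

The text says "especially," so the filter is meant to order your reading, not to rule papers out.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical lookup: journal name -> TOP Factor scores for
# (data, code, materials) transparency.
TopScores = Dict[str, Tuple[int, int, int]]


@dataclass
class Candidate:
    title: str
    journal: str
    year: int
    aea_journal: bool = False  # published in a journal of the American Economic Association


def is_priority(paper: Candidate, top_scores: TopScores) -> bool:
    """Apply the prioritization rules of this section to one candidate paper."""
    if paper.aea_journal:
        return paper.year >= 2019  # AEA policy change took effect in 2019
    if paper.journal == "American Journal of Political Science":
        return paper.year > 2016
    # Other journals: a score of 2 or above in all three transparency dimensions.
    # Journals missing from the lookup default to (0, 0, 0), i.e. not prioritized.
    data, code, materials = top_scores.get(paper.journal, (0, 0, 0))
    return min(data, code, materials) >= 2


# Hypothetical candidates and scores, for illustration only.
scores: TopScores = {"Journal X": (2, 3, 2), "Journal Y": (1, 2, 2)}
candidates: List[Candidate] = [
    Candidate("Paper A", "American Economic Review", 2020, aea_journal=True),
    Candidate("Paper B", "American Economic Review", 2015, aea_journal=True),
    Candidate("Paper C", "American Journal of Political Science", 2018),
    Candidate("Paper D", "Journal X", 2012),
    Candidate("Paper E", "Journal Y", 2021),
]
print([c.title for c in candidates if is_priority(c, scores)])  # ['Paper A', 'Paper C', 'Paper D']
```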

1.3.2 Prioritize based on proxies of paper impact within a field

Instructors and reproducers could prioritize papers based on their impact in their field. We suggest specifying an explicit criterion to define a pool of “high impact” papers. Here we demonstrate one such criterion for the field of Economics.

- Go to Google Scholar metrics

- Select top publications

- Click on categories and select Business, Economics & Management. Then, under subcategories, select Economics.

- Exclude the Journal of Economic Perspectives (a review journal that does little computational analysis).

- Focus on the 10 journals with the highest h5-index. As of August 4, 2021, this yields:

  - American Economic Review
  - The Quarterly Journal of Economics
  - The Review of Financial Studies
  - Journal of Political Economy
  - The Journal of Finance
  - Econometrica
  - The Review of Economic Studies
  - Review of Economics and Statistics
  - The Economic Journal
  - Journal of Public Economics

- For each journal, click on the hyperlinked h5-index number (sort from highest to lowest).

- Select the first 10 articles in the list as sorted by citations. For example, for the American Economic Review, this procedure yields the following articles:

  - The Effects of Exposure to Better Neighborhoods on Children: New Evidence from the Moving to Opportunity Experiment
  - Railroads of the Raj: Estimating the Impact of Transportation Infrastructure
  - The Race between Man and Machine: Implications of Technology for Growth, Factor Shares, and Employment
  - The Surprisingly Swift Decline of US Manufacturing Employment
  - Monetary Policy According to HANK
  - The Determinants and Welfare Implications of US Workers’ Diverging Location Choices by Skill: 1980-2000
  - Disruptive Change in the Taxi Business: The Case of Uber
  - Bartik Instruments: What, When, Why, and How
  - Importing Political Polarization? The Electoral Consequences of Rising Trade Exposure
  - Long-Run Impacts of Childhood Access to the Safety Net


- Starting from the top, select a paper and read its abstract to verify that it includes a computational analysis. Discard the paper if it does not.

- If it does, search socialsciencereproduction.org to check whether a reproduction has already been recorded.

- If a reproduction is recorded, you can create a new reproduction or move to the next paper in the list. If none is recorded, create one.
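The procedure above is essentially a two-level top-k selection followed by two filters. The sketch below expresses it in Python. It assumes you have already copied the h5-index values and citation counts from Google Scholar Metrics by hand; no Scholar API is used or implied. The `Journal` and `Article` classes and the `shortlist` function are hypothetical. So are all journals, articles and numbers in the example.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Article:
    title: str
    citations: int
    computational: bool     # does the abstract describe a computational analysis?
    recorded_in_ssrp: bool  # is a reproduction already recorded on the SSRP?


@dataclass
class Journal:
    name: str
    h5_index: int
    articles: List[Article] = field(default_factory=list)


EXCLUDED = {"Journal of Economic Perspectives"}  # review journal, little computation


def shortlist(journals: List[Journal], n_journals: int = 10,
              n_articles: int = 10) -> List[Tuple[str, str, str]]:
    """Return (journal, article, suggested action) triples following the steps above."""
    eligible = [j for j in journals if j.name not in EXCLUDED]
    top_journals = sorted(eligible, key=lambda j: j.h5_index, reverse=True)[:n_journals]
    result = []
    for journal in top_journals:
        most_cited = sorted(journal.articles, key=lambda a: a.citations, reverse=True)[:n_articles]
        for article in most_cited:
            if not article.computational:
                continue  # discard papers without a computational analysis
            action = ("add a new reproduction or skip" if article.recorded_in_ssrp
                      else "create the first reproduction")
            result.append((journal.name, article.title, action))
    return result


# Hypothetical journals and articles, for illustration only.
journals = [
    Journal("Journal of Economic Perspectives", 99, [Article("Review", 500, False, False)]),
    Journal("Journal A", 80, [Article("A1", 300, True, True), Article("A2", 900, True, False)]),
    Journal("Journal B", 60, [Article("B1", 400, False, False)]),
]
for row in shortlist(journals, n_journals=2, n_articles=2):
    print(row)
```

In this example, the excluded review journal is skipped despite its higher h5-index. Journal B's only article is discarded because it has no computational analysis. Journal A's two articles come out in citation order, each with its suggested next step.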